Rhoda AI’s emergence from stealth with a $450 million Series A round and a $1.7 billion valuation signals how quickly investors are shifting from language-only AI toward systems that move hardware in messy factories and warehouses. The Palo Alto startup is pitching a foundation model for robots, trained on hundreds of millions of internet videos and refined with limited teleoperation data, as a way to push automation beyond tightly controlled cells and into variable industrial workflows. For manufacturers, logistics operators, and capital allocators, the question is whether this new class of “video-native” robot intelligence can move from promising pilots to scaled deployments fast enough to justify the capital flowing into the sector.

Rhoda AI’s funding and ambitions

Rhoda AI ended 18 months in stealth by announcing its FutureVision robot intelligence platform and a $450 million Series A led by Premji Invest, with participation from Capricorn Investment Group, Khosla Ventures, Temasek, Mayfield, Leitmotif, Matter Venture Partners, Prelude Ventures, Xora, and others. The round values the company at roughly $1.7 billion, placing it among the most highly valued early-stage robotics and AI startups and reflecting investor expectations of a large, defensible platform play in industrial automation.

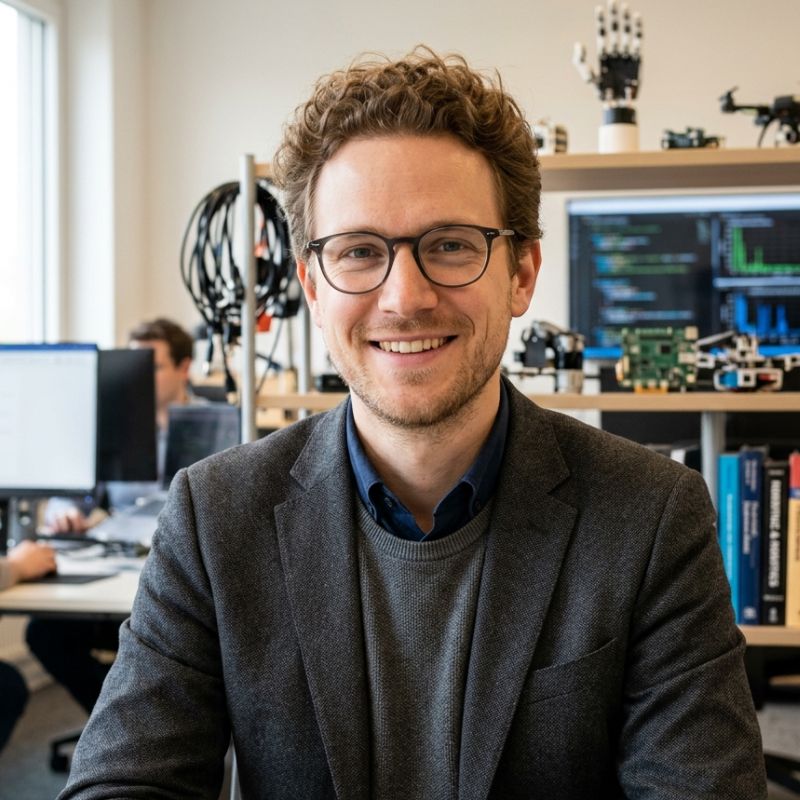

The company is led by CEO and cofounder Jagdeep Singh, a repeat deep-tech founder, alongside Chief Science Officer Eric Ryan Chan, Stanford professor Gordon Wetzstein, and a team with backgrounds in generative AI, computer vision, and robotics. This mix signals that Rhoda is positioning itself less as a traditional robot OEM and more as a model-centric infrastructure provider for manipulation across multiple hardware form factors.

How Direct Video Action is supposed to work

Rhoda’s core technical claim is a proprietary Direct Video Action (DVA) architecture that predicts future video frames and converts those predictions into robot actions in a closed loop. Instead of relying primarily on teleoperated trajectories, the company pre-trains on internet-scale video to learn motion, physics, and object interactions, then post-trains on smaller robot datasets to map predicted futures into specific joint commands.

The system continuously observes its environment, forecasts near-term states as video, chooses actions, executes them, and re-observes the world every few hundred milliseconds, updating behavior as conditions change. Rhoda argues that this natively autoregressive, feedback-driven setup allows robots to handle shifting layouts, previously unseen objects, and other real-world perturbations that often break open-loop or purely scripted systems. According to the company, the strong motion prior from video training reduces the amount of robot-specific data needed, with some tasks reportedly learnable from roughly ten hours of teleoperation.
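
The observe, forecast, act, re-observe cycle described above can be illustrated with a toy sketch. This is not Rhoda's implementation: the one-dimensional `World`, the `predict_next` forward model, and the fixed `CANDIDATE_ACTIONS` set are all hypothetical stand-ins, and a real system would forecast video frames rather than a single number. The point is only to show why re-observing every cycle lets a controller recover when the target moves mid-task.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass
class World:
    """Toy 1-D stand-in for a workcell: one gripper, one target (hypothetical)."""
    gripper: float
    target: float

    def observe(self) -> Tuple[float, float]:
        # A real system would return camera frames; here it is two numbers.
        return self.gripper, self.target

    def apply(self, action: float) -> None:
        self.gripper += action


# Hypothetical discrete action set, chosen only for illustration.
CANDIDATE_ACTIONS = (-1.0, -0.5, 0.0, 0.5, 1.0)


def predict_next(gripper: float, action: float) -> float:
    """Hypothetical forward model: forecast the next state for a given action."""
    return gripper + action


def choose_action(gripper: float, target: float) -> float:
    """Pick the candidate whose predicted outcome lands closest to the target."""
    return min(CANDIDATE_ACTIONS,
               key=lambda a: abs(predict_next(gripper, a) - target))


def run_closed_loop(world: World, steps: int,
                    shift_at: int, new_target: float) -> float:
    """Run the loop, moving the target once to simulate a perturbation."""
    for step in range(steps):
        if step == shift_at:
            world.target = new_target          # the part is moved mid-task
        gripper, target = world.observe()      # re-observe every cycle
        world.apply(choose_action(gripper, target))
    return world.gripper


final = run_closed_loop(World(gripper=0.0, target=5.0),
                        steps=20, shift_at=3, new_target=-2.0)
print(final)  # -2.0: the gripper ends at the relocated target
```

Because the action is re-chosen from a fresh observation on every step, the gripper reverses course as soon as the target moves. A plan computed once at the start would keep heading toward the original position.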

From lab demos to production lines

Traditional industrial robots excel at repeatable operations—welding, painting, palletizing—within fixed workcells, but they struggle with high-mix, low-volume tasks and unstructured material flows. Recent vision-language-action research has shown compelling lab demos, yet many systems still fail when confronted with the variability of live production, from inconsistent parts presentation to upstream schedule disruptions.

Rhoda states that its technology has already run autonomously in production environments, including a high-volume manufacturing evaluation where its system completed a component-processing workflow in under two minutes per cycle without human intervention and exceeded customer KPIs. Investor Jens Wiese, a former Volkswagen Group executive, framed the impact as expanding the scope of tasks that can be automated, particularly those with historically high variability. In practice, that means targeting operations that today depend on skilled human technicians—complex assembly, kitting, rework—rather than only the most structured stations.

Business model: platform first, hardware optional

Rhoda describes FutureVision as an intelligence layer that powers its own systems today and will increasingly be licensed across partners’ hardware and software platforms. The company has also indicated that it is developing its own robotics hardware, including humanoid or humanoid-adjacent platforms, to ensure that the model can be exercised against demanding manipulation workloads in production environments.

That dual approach—platform licensing plus branded systems—matches patterns seen in other frontier robotics players that aim to capture both software economics and showcase deployments. For industrial customers, the key questions will be integration friction with existing PLCs, MES, and safety architectures, as well as how quickly Rhoda’s model can be adapted to their preferred robot arms, grippers, and mobile bases. Licensing revenue and long-term support contracts will depend on predictable performance across heterogeneous fleets rather than bespoke, one-off implementations.

Competitive and ecosystem context

Rhoda enters an increasingly crowded field of companies chasing “general” robotic manipulation, including firms developing humanoid robots, full-stack mobile manipulators, and AI control software layers for incumbent hardware. Several peers also use large-scale video and multimodal data, but Rhoda’s emphasis on Direct Video Action and closed-loop, video-predictive control sets its narrative apart from approaches that rely on language-driven instruction following alone.

The funding round comes amid a broader capital rotation into robotics platforms seen as leverage points for labor-constrained manufacturing and logistics operations. Indexes of recent Series A activity show robotics and industrial AI accounting for a significant share of US venture dollars, and investors like Premji Invest explicitly frame Rhoda as a candidate to build a “large, enduring business” via a data flywheel from scaled deployments. That flywheel depends on getting enough robots into real-world workflows to encounter the long tail of edge cases and continuously retrain models.

Deployment realities and open questions

Despite the strong capital backing, the company remains early in commercial rollout, with references to “industrial deployments and customer pilots” rather than broad production fleets. In many factories, introducing a model-driven manipulation layer still requires months of systems integration, safety validation, operator training, and change management before the first cycle is run under real takt constraints.

Key unknowns for operators and investors include:

Total cost of ownership compared with traditional automation cells and human labor.

Reliability over multi-shift operations, including failure modes when predictions are wrong or sensors degrade.

How quickly FutureVision can be retuned when product variants, fixtures, or layouts change.

Cybersecurity and IP protection when models are trained on in-facility data.

Rhoda points to early results and investor testimonials as evidence that its system can handle variability better than conventional approaches, but independently verified performance data across multiple sites and verticals has yet to emerge publicly. For now, its value proposition rests on the combination of a video-native foundation model, closed-loop control, and a war chest large enough to fund extensive pilots and iterative product work with flagship customers.

Near-term outlook and longer-term stakes

In the near term, Rhoda’s priority will be converting its $450 million round into credible reference deployments in manufacturing and logistics, where cycle times, uptime, and quality metrics can be benchmarked against incumbent automation and human operators. Success will likely depend on close collaboration with systems integrators and OEM partners that understand brownfield constraints and can package FutureVision as part of end-to-end solutions.

Over the longer term, if Rhoda or similar platforms prove that video-pretrained, closed-loop models can generalize across tasks and sites, the economics of robotics could shift toward software-centric scaling, with model updates delivering step-change improvements to installed bases. That dynamic would favor companies that reach deployment scale early and accumulate the richest distribution of real-world interaction data. For founders, operators, and investors tracking the industrial AI stack, Rhoda’s launch is less a one-off funding headline than a test of whether foundation models for the physical world can finally move robots out of the lab and into the core of production.

futureTEKnow is focused on identifying and promoting creators, disruptors and innovators, and serving as a vital resource for those interested in the latest advancements in technology.

© 2026 All Rights Reserved.